When working with data in Python, it’s not uncommon to encounter duplicates.

Whether you’re working with a list, a pandas DataFrame, or some other data structure, removing duplicates can be an important step in your data cleaning process.

In this article, we’ll take a look at a few quick and easy ways to drop duplicates in Python.

Removing Duplicates from a List

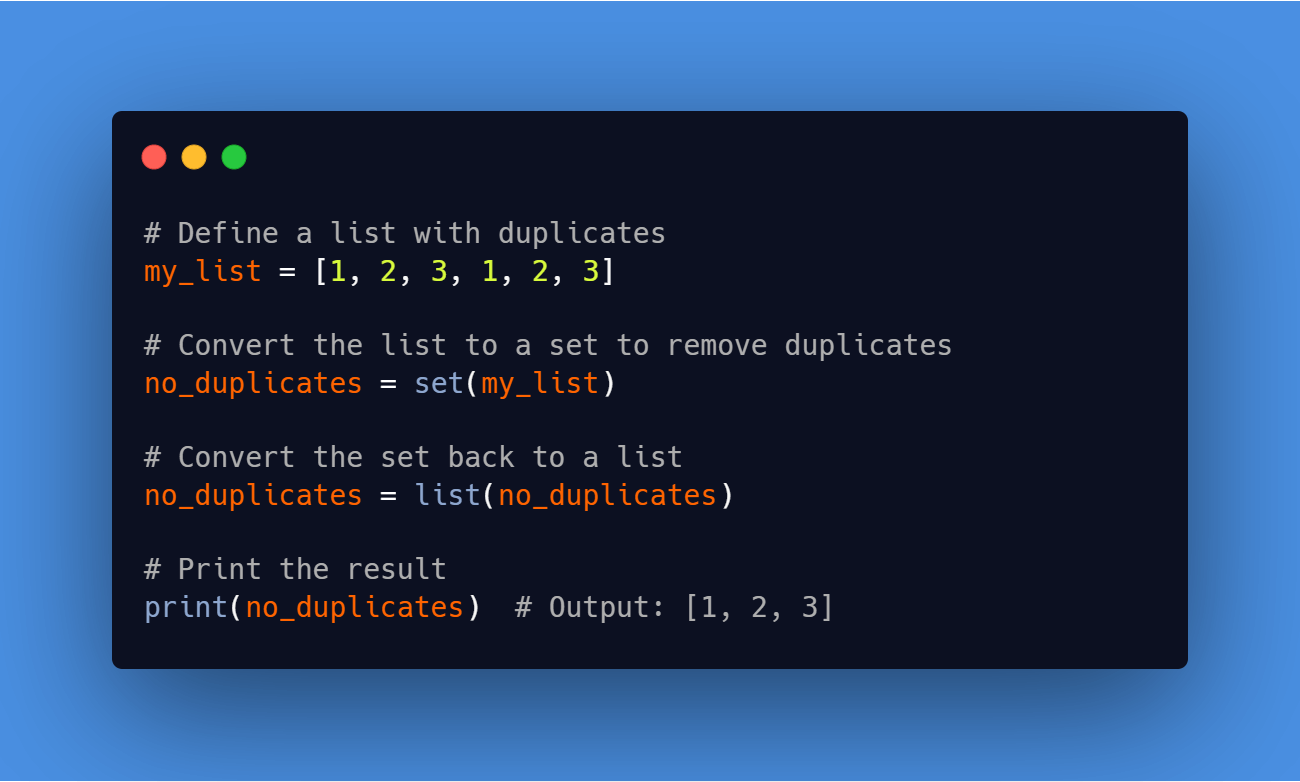

The most basic way to remove duplicates from a list is to convert it to a set and then back to a list.

A set is a data structure that does not allow duplicates, so converting a list to a set will automatically remove any duplicates.

Here’s an example of how to do this:

# Define a list with duplicates
my_list = [1, 2, 3, 1, 2, 3]

# Convert the list to a set to remove duplicates
no_duplicates = set(my_list)

# Convert the set back to a list
no_duplicates = list(no_duplicates)

# Print the result
print(no_duplicates)  # Output: [1, 2, 3] (a set does not guarantee this order)

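This method works well if you don’t need to preserve the order of the elements in your list. It also requires every element to be hashable, so it works for numbers, strings, and tuples but not for nested lists or dicts.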

If you do need to preserve the order, you can use a for loop to iterate through the list and add unique elements to a new list:

# Define a list with duplicates
my_list = [1, 2, 3, 1, 2, 3]

# Create a new list to store the unique elements
no_duplicates = []

# Iterate through the list and add unique elements to the new list
for element in my_list:
    if element not in no_duplicates:
        no_duplicates.append(element)

# Print the result
print(no_duplicates)  # Output: [1, 2, 3]
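The loop works, but `element not in no_duplicates` rescans the new list on every iteration, which gets slow for long inputs. A common alternative, offered here as a sketch rather than the only way, is `dict.fromkeys`: dictionaries ignore repeated keys and keep insertion order (guaranteed since Python 3.7). Like the set approach, it assumes every element is hashable. The input list below is reordered on purpose to show that the original order survives.

```python
# Define a list with duplicates (deliberately not sorted)
my_list = [3, 1, 2, 3, 1, 2]

# dict.fromkeys keeps one key per distinct value, in first-seen order
no_duplicates = list(dict.fromkeys(my_list))

# Print the result
print(no_duplicates)  # Output: [3, 1, 2]
```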

Removing Duplicates from a Pandas DataFrame

If you’re working with data in a pandas DataFrame, you can use the DataFrame.drop_duplicates method to remove duplicate rows. This method takes a few optional arguments, including:

- subset: a column name or list of column names to consider when determining duplicates. If you don’t specify this argument, the method will consider all columns.
- keep: "first" (to keep the first occurrence of each duplicate row), "last" (to keep the last occurrence), or False (to drop every occurrence). By default, the method will keep the first occurrence.
- inplace: a boolean indicating whether to modify the original DataFrame or create a new one. By default, the method will return a new DataFrame.

Here’s an example of how to use the drop_duplicates function to remove duplicates from a DataFrame:

import pandas as pd

# Define a DataFrame with duplicates
df = pd.DataFrame({"A": [1, 2, 3, 1, 2, 3], "B": [4, 5, 6, 4, 5, 6]})

# Drop duplicates
df = df.drop_duplicates()

# Print the result
print(df)

This will create a new DataFrame with the duplicate rows removed. The resulting DataFrame will look like this:

   A  B
0  1  4
1  2  5
2  3  6
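The example above reassigns the result to `df`. The `inplace` argument is the other route: with `inplace=True` the method changes the DataFrame directly and returns `None`, so its result should not be assigned back. A minimal sketch (the `result` variable is only there to show the return value):

```python
import pandas as pd

# Define a DataFrame with duplicates
df = pd.DataFrame({"A": [1, 2, 3, 1, 2, 3], "B": [4, 5, 6, 4, 5, 6]})

# Modify df directly; the call itself returns None
result = df.drop_duplicates(inplace=True)

print(result)   # Output: None
print(len(df))  # Output: 3
```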

If you want to keep the last occurrence of each duplicate row instead of the first, you can use the keep argument:

import pandas as pd

# Define a DataFrame with duplicates
df = pd.DataFrame({"A": [1, 2, 3, 1, 2, 3], "B": [4, 5, 6, 4, 5, 6]})

# Drop duplicates, keeping the last occurrence
df = df.drop_duplicates(keep="last")

# Print the result
print(df)

This will create a new DataFrame with the duplicate rows removed, keeping only the last occurrence:

   A  B
3  1  4
4  2  5
5  3  6
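Note that the surviving rows keep their original index labels (3, 4, 5). If a fresh 0-based index is preferred, one option is the `ignore_index` argument. This sketch assumes pandas 1.0 or later; on older versions, chaining `.reset_index(drop=True)` after `drop_duplicates` should give the same result.

```python
import pandas as pd

# Define a DataFrame with duplicates
df = pd.DataFrame({"A": [1, 2, 3, 1, 2, 3], "B": [4, 5, 6, 4, 5, 6]})

# Keep the last occurrence and renumber the remaining rows from 0
deduped = df.drop_duplicates(keep="last", ignore_index=True)

print(deduped.index.tolist())  # Output: [0, 1, 2]
```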

If you want to only consider a subset of columns when determining duplicates, you can use the subset argument. For example, suppose you have a DataFrame with three columns, A, B, and C, and you only want to consider duplicates in the A and B columns:

import pandas as pd

# Define a DataFrame with duplicates
df = pd.DataFrame({"A": [1, 2, 3, 1, 2, 3], "B": [4, 5, 6, 4, 5, 6], "C": [7, 8, 9, 10, 11, 12]})

# Drop duplicates, considering only the A and B columns
df = df.drop_duplicates(subset=["A", "B"])

# Print the result
print(df)

This will create a new DataFrame with the duplicate rows removed, considering only the A and B columns:

   A  B  C
0  1  4  7
1  2  5  8
2  3  6  9
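Before dropping anything, it can help to see which rows pandas treats as duplicates. The sketch below uses `DataFrame.duplicated`, which accepts the same `subset` and `keep` arguments and returns a boolean mask. It also shows `keep=False`, which discards every row that has a duplicate instead of keeping one copy. The last row (A=9, B=9) is made up for illustration so that something besides the (3, 6) row survives.

```python
import pandas as pd

# Hypothetical data: the last row is unique in columns A and B
df = pd.DataFrame({
    "A": [1, 2, 3, 1, 2, 9],
    "B": [4, 5, 6, 4, 5, 9],
    "C": [7, 8, 9, 10, 11, 12],
})

# True for every row that repeats an earlier (A, B) pair
mask = df.duplicated(subset=["A", "B"])
print(mask.tolist())  # Output: [False, False, False, True, True, False]

# keep=False drops all rows whose (A, B) pair occurs more than once
unique_only = df.drop_duplicates(subset=["A", "B"], keep=False)
print(unique_only["A"].tolist())  # Output: [3, 9]
```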

Conclusion

In this article, we’ve looked at a few quick and easy ways to drop duplicates in Python. Whether you’re working with a list or a pandas DataFrame, there are a number of options available to help you remove duplicates and clean up your data.
